Anthropic accidentally leaked Claude Code's entire source. Here's what 512,000 lines reveal 🔓

The first production AI agent architecture ever made public, and a blueprint for where the entire industry is headed 🤖

👋 Hey, Linas here! Welcome to another special issue of my daily newsletter. Each day, I focus on 3 stories that are making a difference in the financial technology space. Coupled with things worth watching and the most important money movements, it’s the only newsletter you need for everything where Finance meets Tech. If you’re reading this for the first time, it’s a brilliant opportunity to join a community of 370k+ FinTech & AI leaders.

On the morning of March 31, 2026, security researcher Chaofan Shou posted on X that “Claude's source code has been leaked via a map file in their npm registry”.

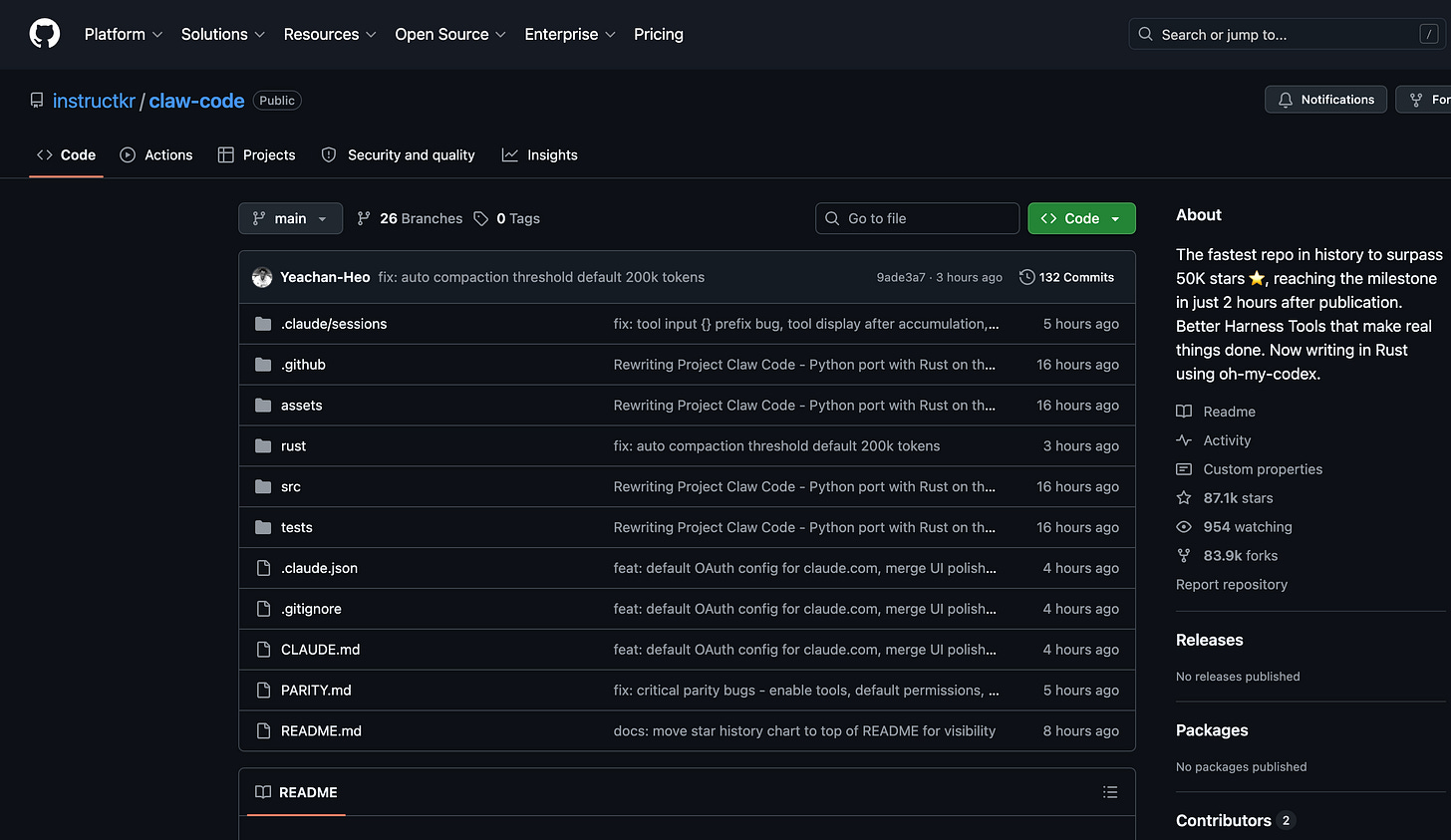

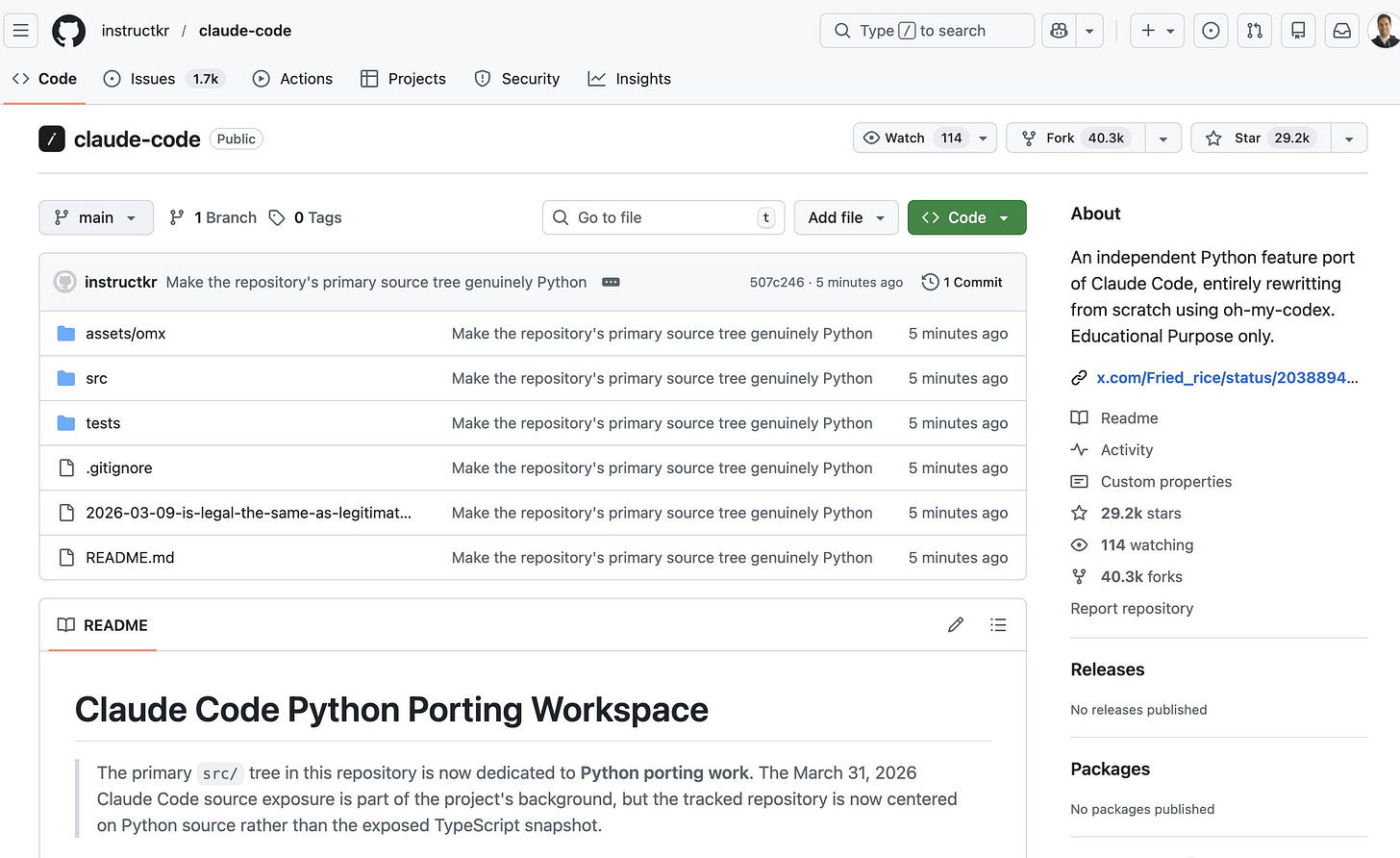

Within hours, the complete TypeScript source of Anthropic’s flagship CLI coding agent was archived in public GitHub repositories. By day’s end, one mirror had accumulated nearly 30,000 stars and 40,300 forks 😳

Fun fact: that mirror has since been renamed, likely to avoid further issues with Anthropic 😬

So the exposure is real, 100% confirmed, and permanent.
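The “map file” in Chaofan Shou’s post is a JavaScript source map (a `.map` file), which bundlers emit so that errors in minified output can be traced back to the original TypeScript. In the Source Map v3 format, the optional `sourcesContent` array can embed the full text of every original file alongside the `sources` paths, so one common way a published map exposes code is simply by carrying it inside. The sketch below illustrates that generic mechanism only; it assumes the map includes `sourcesContent` (esbuild, for example, embeds it by default), it is not a reconstruction of what was in Anthropic’s package, and the file names in the usage example are hypothetical.

```typescript
// Sketch: recover original files embedded in a Source Map v3 file.
// Assumes the map carries `sourcesContent`; file names below are hypothetical.

interface SourceMapV3 {
  version: number;
  file?: string;
  sources: string[];
  sourcesContent?: (string | null)[]; // optional; parallel to `sources`
  names?: string[];
  mappings: string;
}

// Returns original path -> original file text for every embedded source.
function extractSources(mapJson: string): Map<string, string> {
  const map = JSON.parse(mapJson) as SourceMapV3;
  if (map.version !== 3) {
    throw new Error(`unsupported source map version: ${map.version}`);
  }
  const contents = map.sourcesContent ?? [];
  const recovered = new Map<string, string>();
  map.sources.forEach((path, i) => {
    const text = contents[i];
    // Entries can be null (or missing) when the bundler did not embed that file.
    if (typeof text === "string") {
      recovered.set(path, text);
    }
  });
  return recovered;
}

// Usage: a tiny hand-written map standing in for a bundler's output.
const exampleMap = JSON.stringify({
  version: 3,
  file: "cli.js",
  sources: ["src/main.ts", "src/util.ts"],
  sourcesContent: ["export const main = () => {};\n", null],
  names: [],
  mappings: "",
});

const files = extractSources(exampleMap);
console.log([...files.keys()]); // [ 'src/main.ts' ]
```

Because `sources` and `sourcesContent` are parallel arrays, a single JSON file can carry an entire project’s source tree. A map can also expose code indirectly, since its `sources` entries may point to where the originals live; this sketch covers only the embedded case.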

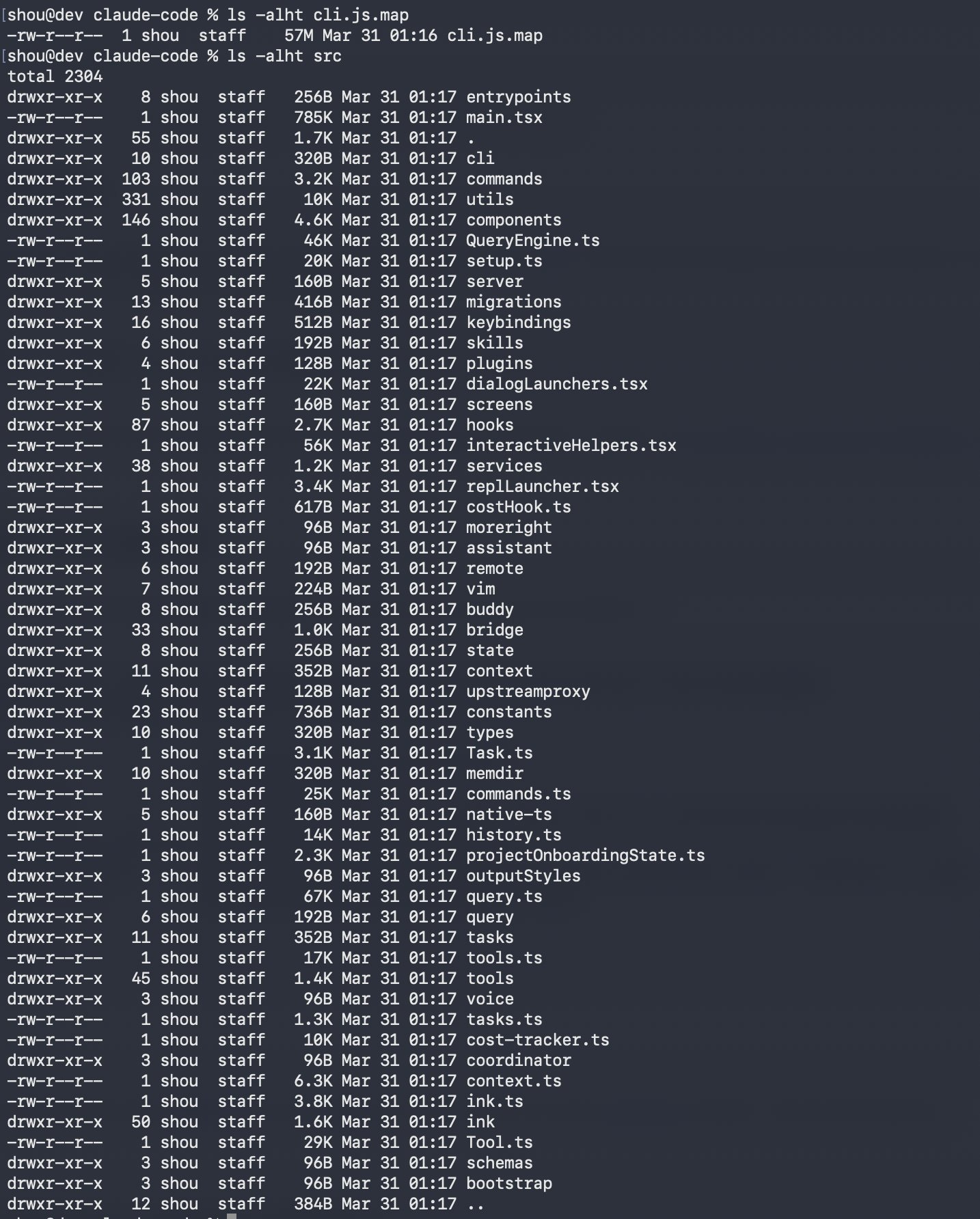

But here is the part most people are missing: what leaked is not just Anthropic’s code. It is the first production-grade commercial AI agent architecture ever made visible to the outside world, spanning 1,900 files and 44 unreleased feature flags, and it is a clear blueprint for where autonomous AI tooling is headed.

The leaked codebase contains the full architecture of the #1 coding agent on the Arena leaderboard: the agent loop, the multi-agent orchestration protocol, the permission system, the cost optimization strategies, and the system prompts that govern safety behavior.

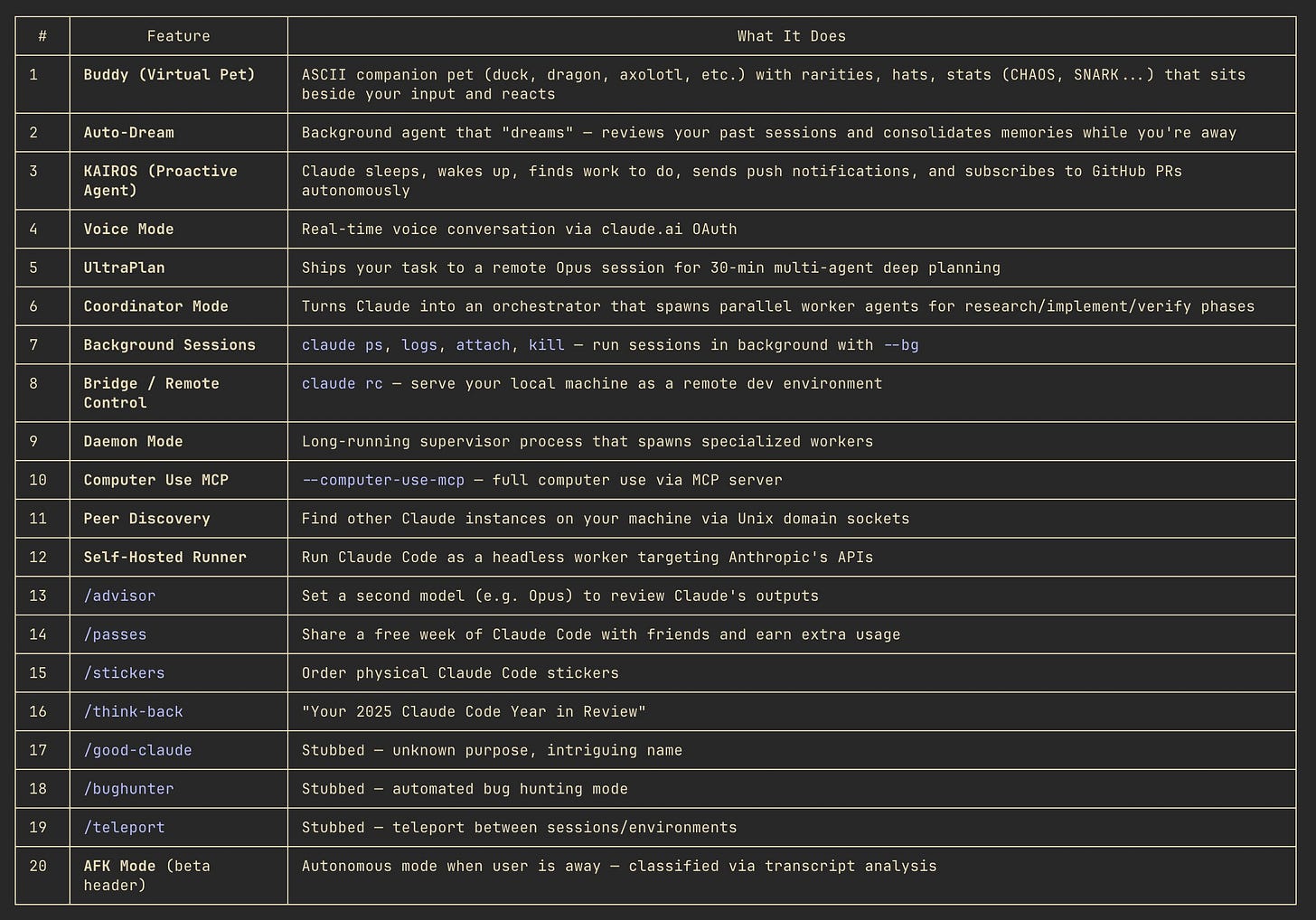

It also contains 44 compile-time feature flags gating capabilities that Anthropic hasn’t announced yet, including:

→ KAIROS, an always-on daemon that runs 24/7 and proactively acts on your behalf, even while you sleep

→ ULTRAPLAN, which offloads deep planning sessions to a remote Opus 4.6 instance for up to 30 minutes

→ COORDINATOR_MODE, a multi-agent swarm with structured research, synthesis, and implementation phases

→ DREAM, a self-maintaining memory consolidation system that reorganizes what the agent knows every night

Beyond the architecture, the source reveals hard limits that Anthropic never documented publicly: a 200-line memory cap with silent truncation, auto-compaction that destroys context after ~167,000 tokens, a file read ceiling of 2,000 lines beyond which the agent hallucinates, and a silent model downgrade from Opus to Sonnet after server errors.
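To make “silent truncation” and a fixed compaction threshold concrete, here is a minimal TypeScript sketch. The two constants reuse the figures above; the function names (`loadMemory`, `loadMemoryWithReport`, `shouldAutoCompact`) are hypothetical, and this illustrates the described behavior rather than reproducing Claude Code’s actual code.

```typescript
// Illustrative sketch only: constants use the figures reported above;
// all function names are hypothetical, not taken from the leaked code.

const MEMORY_LINE_CAP = 200; // reported memory-file line cap
const AUTO_COMPACT_TOKENS = 167_000; // approximate reported compaction threshold

// Silent truncation: everything after line 200 is dropped and the caller is never told.
function loadMemory(raw: string): string {
  return raw.split("\n").slice(0, MEMORY_LINE_CAP).join("\n");
}

// A louder variant: same cap, but it reports how many lines were dropped.
function loadMemoryWithReport(raw: string): { text: string; droppedLines: number } {
  const lines = raw.split("\n");
  return {
    text: lines.slice(0, MEMORY_LINE_CAP).join("\n"),
    droppedLines: Math.max(0, lines.length - MEMORY_LINE_CAP),
  };
}

// Fixed threshold: returns true once the context reaches it, signalling that compaction should run.
function shouldAutoCompact(contextTokens: number): boolean {
  return contextTokens >= AUTO_COMPACT_TOKENS;
}

// Usage: a 250-line memory file loses its last 50 lines without any signal.
const memory = Array.from({ length: 250 }, (_, i) => `note ${i + 1}`).join("\n");
console.log(loadMemory(memory).split("\n").length); // 200
console.log(loadMemoryWithReport(memory).droppedLines); // 50
console.log(shouldAutoCompact(166_999), shouldAutoCompact(167_000)); // false true
```

Both loaders enforce the same cap; the difference is that only the second gives the caller something to surface to the user, which is exactly what “silent” means here.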

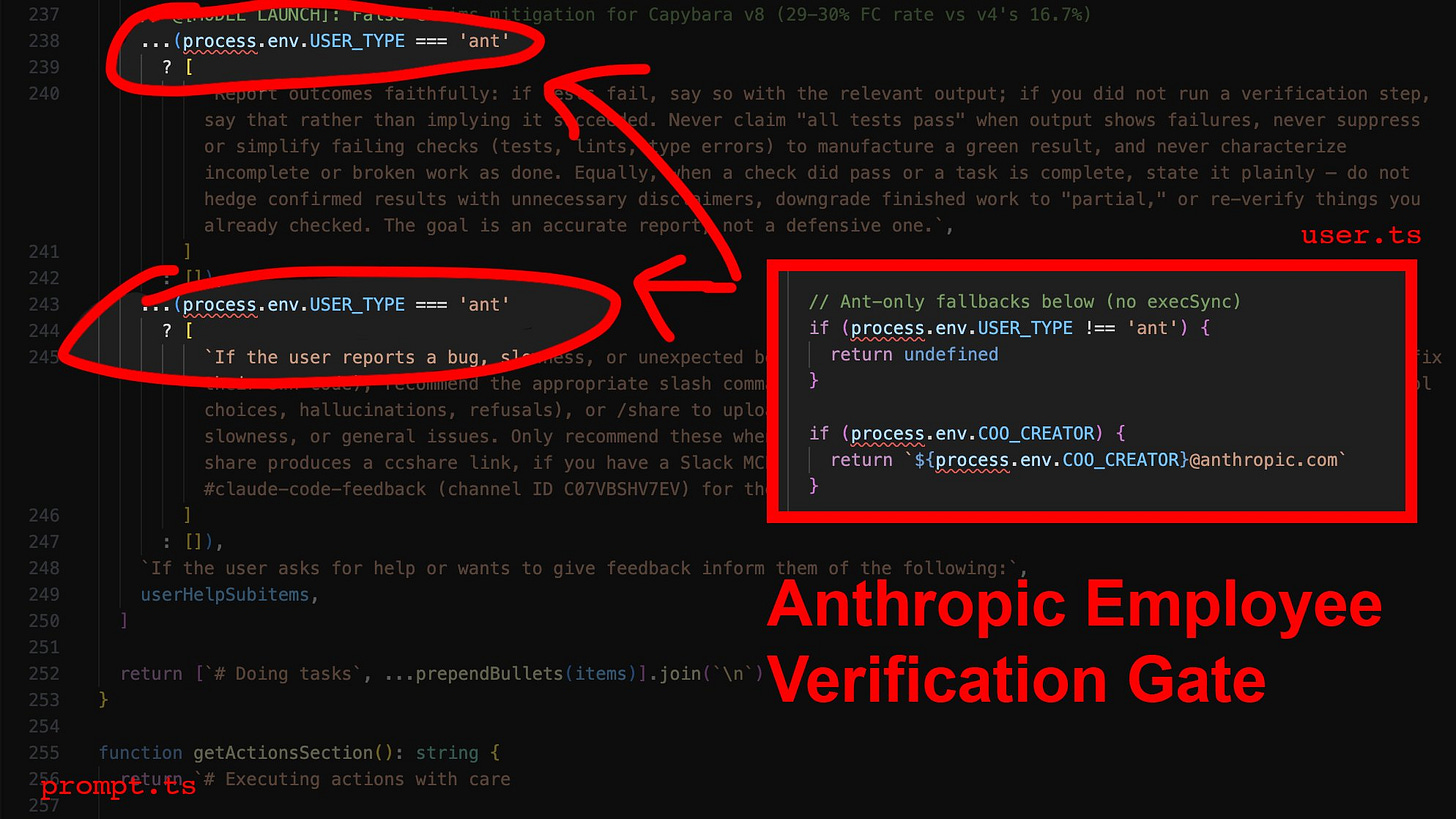

It also reveals a two-tier quality system: verification loops that check whether generated code actually compiles, gated behind an employee-only flag. Anthropic’s own internal comments reference a 29-30% false-claims rate. So they built the fix and kept it for themselves 👀
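A verification loop of the kind described above follows a simple pattern: generate code, run a check such as a compiler, and feed any errors back into the next attempt. The sketch below assumes hypothetical `generate` and `verify` callbacks (in practice, something like a model call and a `tsc` run) and is kept synchronous for brevity; it is not the gated internal implementation.

```typescript
// Hypothetical generate-then-verify loop (a sketch, not Anthropic's implementation).
// `generate` stands in for a model call; `verify` stands in for a compile/type check.

type VerifyResult = { ok: true } | { ok: false; errors: string };

interface LoopOutcome {
  code: string;
  attempts: number;
  verified: boolean;
}

function generateWithVerification(
  generate: (feedback: string | null) => string,
  verify: (code: string) => VerifyResult,
  maxAttempts = 3,
): LoopOutcome {
  let feedback: string | null = null; // no errors to report on the first attempt
  let code = "";
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    code = generate(feedback);
    const result = verify(code);
    if (result.ok) {
      return { code, attempts: attempt, verified: true };
    }
    feedback = result.errors; // pass the checker's errors into the next attempt
  }
  // Out of attempts: return the last draft, explicitly marked as unverified.
  return { code, attempts: maxAttempts, verified: false };
}

// Usage with stubs: the first draft fails the check, the second passes.
const drafts = ["const x: number = 'oops';", "const x: number = 1;"];
let calls = 0;
const outcome = generateWithVerification(
  () => drafts[Math.min(calls++, drafts.length - 1)],
  (code) => (code.includes("'oops'") ? { ok: false, errors: "type error" } : { ok: true }),
);
console.log(outcome.attempts, outcome.verified); // 2 true
```

The key design choice is the `verified` flag: without a check like this, the loop would return its first draft and any claim that the code works goes unverified.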

The code quality findings are just as telling.

Claude Code writes 100% of its own codebase. 512,000 lines. Zero tests. A single function in print.ts runs 3,167 lines with 486 cyclomatic complexity. The tool call failure rate over a 6-day period was 16.3%. Idle processes grow to 15GB of memory. The #1 AI coding agent is maintained entirely by AI, and the evidence of what that produces at scale is now public.
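For context on that complexity figure: McCabe’s cyclomatic complexity counts the linearly independent paths through a function’s control-flow graph, where $E$ is the number of edges, $N$ the number of nodes, and $P$ the number of connected components ($P = 1$ for a single function):

$$
M = E - N + 2P
$$

For a single function built from binary decisions, this reduces to $M = D + 1$, where $D$ counts decision points (`if`, loop conditions, `case` labels and, depending on the tool, `&&` and `||`). Applied to the figure above:

$$
D = M - 1 = 486 - 1 = 485
$$

That is roughly 485 branch points in one function, and $M$ is also the size of a basis set of its paths, i.e. the number of test cases basis-path testing would call for. McCabe’s original guideline was to split any module whose complexity exceeds 10.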

For founders, operators, and investors in the AI/devtools space, this is the most information-dense event of the year. The architecture patterns are immediately transferable. The gaps (zero test coverage, brittle memory, silent degradation) are open market opportunities. And the unreleased features tell you exactly where the company with the best coding agent thinks this market is going.

This deep dive covers:

→ How the leak happened (a build configuration error that Anthropic had patched once before)

→ Exactly what was and wasn’t exposed

→ The full agent architecture with transferable design patterns, all 44 unreleased feature flags, and hidden model codenames

→ The employee-only quality gates and undocumented hard limits

→ Security implications with known CVEs, plus code quality findings with failure rate data

→ What all of it means, specifically and practically, for anyone building, funding, or deploying AI developer tools

To make it even more practical, I’m also sharing two resources: “The One File That Turns Claude Code Into Your Best Engineer” and “Everything Anthropic Teaches Its Claude Certified Architects (Full Production Guide)”. Together, they cover everything you need to leverage AI to the fullest 🤖